NeuZephyr

Simple DL Framework
Represents an Exponential Linear Unit (ELU) activation function node in a computational graph.

Public Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| | ELUNode (Node *input, Tensor::value_type alpha=1.0f) | Constructor to initialize an ELUNode for applying the ELU activation function. |
| void | forward () override | Forward pass for the ELUNode to apply the ELU activation function. |
| void | backward () override | Backward pass for the ELUNode to compute gradients. |

Public Member Functions inherited from nz::nodes::Node

| Return type | Member | Description |
| --- | --- | --- |
| virtual void | print (std::ostream &os) const | Prints the type, data, and gradient of the node. |
| void | dataInject (Tensor::value_type *data, bool grad=false) const | Injects data into a relevant tensor object, optionally setting its gradient requirement. |
| template<typename Iterator> void | dataInject (Iterator begin, Iterator end, const bool grad=false) const | Injects data from an iterator range into the output tensor of the InputNode, optionally setting its gradient requirement. |
| void | dataInject (const std::initializer_list< Tensor::value_type > &data, bool grad=false) const | Injects data from a std::initializer_list into the output tensor of the Node, optionally setting its gradient requirement. |

Represents an Exponential Linear Unit (ELU) activation function node in a computational graph.

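The ELUNode class applies the Exponential Linear Unit (ELU) activation function to the input tensor. The ELU function is defined as:

$$
\mathrm{ELU}(x) = \begin{cases} x, & x > 0 \\ \alpha \left(e^{x} - 1\right), & x \le 0 \end{cases}
$$

where alpha scales the negative branch: outputs for negative inputs decay smoothly toward -alpha.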
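To make the role of alpha concrete, the following is a minimal scalar sketch assuming the standard ELU definition (x for positive inputs, alpha * (exp(x) - 1) otherwise). The names `elu_scalar` and `print_elu_samples` are hypothetical helpers for illustration and are not part of the NeuZephyr API.

```cpp
#include <cassert>
#include <cmath>
#include <cstdio>

// Scalar ELU (hypothetical helper, not part of the NeuZephyr API):
// identity for positive inputs, alpha * (exp(x) - 1) otherwise.
float elu_scalar(float x, float alpha = 1.0f) {
    // expm1(x) computes exp(x) - 1 accurately for x close to zero.
    return x > 0.0f ? x : alpha * std::expm1(x);
}

// Prints ELU values for a few inputs to show the negative branch
// flattening out near -alpha.
void print_elu_samples(float alpha) {
    assert(elu_scalar(0.0f, alpha) == 0.0f);  // both branches meet at zero
    const float xs[] = {-10.0f, -1.0f, 0.0f, 2.0f};
    for (float x : xs) {
        std::printf("alpha = %.2f  x = %6.2f  ELU(x) = %8.4f\n",
                    alpha, x, elu_scalar(x, alpha));
    }
}
```

Calling `print_elu_samples(1.0f)` and `print_elu_samples(0.5f)` side by side shows that positive inputs are unaffected by alpha, while large negative inputs level off near -alpha.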

Key features:

This class is part of the nz::nodes namespace and is often used to improve learning dynamics in deep networks by reducing the vanishing gradient problem for negative inputs.

The alpha parameter defaults to 1.0 but can be customized during construction to control the behavior for negative inputs.

ELUNode (Node *input, Tensor::value_type alpha=1.0f) [explicit]

Constructor to initialize an ELUNode for applying the ELU activation function.

The constructor initializes an ELUNode, which applies the Exponential Linear Unit (ELU) activation function to an input tensor. It establishes a connection to the input node, initializes the output tensor, and sets the alpha parameter and node type.

| Parameter | Description |
| --- | --- |
| input | A pointer to the input node. Its output tensor will have the ELU activation applied. |
| alpha | The scaling parameter for negative input values. Defaults to 1.0. |

- The input node is added to the inputs vector to establish the connection in the computational graph.
- The output tensor is initialized with the same shape as the input tensor, and its gradient tracking follows the input tensor's requirements.
- The alpha parameter controls the scaling for negative input values, influencing the gradient flow and smoothness of the activation.
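The constructor's bookkeeping can be pictured with a small stand-alone mock. This is only a sketch under stated assumptions: `SketchTensor`, `SketchNode`, and `SketchEluNode` are hypothetical stand-ins, not the framework's Tensor, Node, or ELUNode types, and the node-type assignment mentioned above is omitted because its representation is not shown here.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a tensor: only shape and gradient flag.
struct SketchTensor {
    std::vector<std::size_t> shape;
    bool requiresGrad = false;
};

// Hypothetical stand-in for a graph node.
struct SketchNode {
    std::vector<SketchNode*> inputs;  // upstream nodes in the graph
    SketchTensor output;              // result produced by this node
};

// Mirrors the three constructor steps described above.
struct SketchEluNode : SketchNode {
    float alpha;

    explicit SketchEluNode(SketchNode* input, float alpha = 1.0f) : alpha(alpha) {
        assert(input != nullptr);
        inputs.push_back(input);                           // link into the graph
        output.shape = input->output.shape;                // same shape as the input
        output.requiresGrad = input->output.requiresGrad;  // follow the input's tracking
    }
};
```

Because the constructor is explicit, a node pointer is never silently converted into an ELU node; the caller has to write the construction out, for example `SketchEluNode elu(&source, 0.5f);`.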

void backward () [override, virtual]

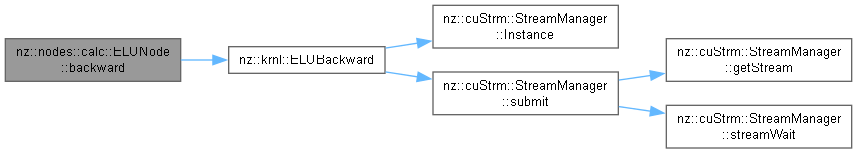

Backward pass for the ELUNode to compute gradients.

The backward() method computes the gradient of the loss with respect to the input tensor by applying the derivative of the ELU activation function. The gradient computation is based on the formula:

$$
\mathrm{ELU}'(x) = \begin{cases} 1, & x > 0 \\ \alpha e^{x}, & x \le 0 \end{cases}
$$

where alpha is the scaling parameter for negative input values.

- A CUDA kernel (ELUBackward) is launched to compute the gradients in parallel on the GPU.
- The gradient of the output tensor is used to compute the input gradient.
- The alpha parameter, provided during construction, controls the gradient scale for negative input values.
- Gradients are computed only if the input tensor's requiresGrad property is true.

Implements nz::nodes::Node.

Definition at line 467 of file Nodes.cu.
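As a CPU-side illustration of what the backward step computes per element, here is a minimal reference sketch. It assumes the standard ELU derivative (1 for x > 0, alpha * exp(x) otherwise) multiplied by the upstream gradient via the chain rule. The function `elu_backward_reference` is a hypothetical helper, not the ELUBackward kernel, and whether the real kernel overwrites or accumulates into the input gradient is not stated here.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// CPU reference for an element-wise ELU backward step (hypothetical helper).
// grad_output holds dLoss/dOutput; the result holds dLoss/dInput.
std::vector<float> elu_backward_reference(const std::vector<float>& input,
                                          const std::vector<float>& grad_output,
                                          float alpha = 1.0f) {
    assert(input.size() == grad_output.size());
    std::vector<float> grad_input(input.size());
    for (std::size_t i = 0; i < input.size(); ++i) {
        // Local derivative of ELU at input[i].
        const float local = input[i] > 0.0f ? 1.0f : alpha * std::exp(input[i]);
        // Chain rule: scale the upstream gradient by the local derivative.
        grad_input[i] = grad_output[i] * local;
    }
    return grad_input;
}
```

On the GPU each loop iteration would correspond to one thread's work; the loop form is used here only so the arithmetic can be checked on the host.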

void forward () [override, virtual]

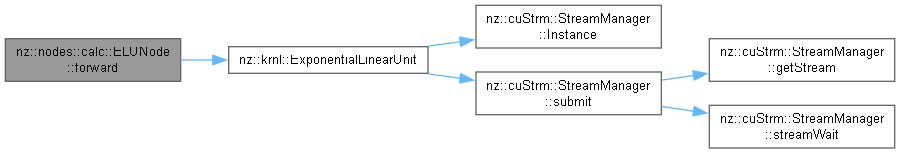

Forward pass for the ELUNode to apply the ELU activation function.

The forward() method applies the Exponential Linear Unit (ELU) activation function element-wise to the input tensor. The result is stored in the output tensor. The ELU function is defined as:

$$
\mathrm{ELU}(x) = \begin{cases} x, & x > 0 \\ \alpha \left(e^{x} - 1\right), & x \le 0 \end{cases}
$$

- A CUDA kernel (ExponentialLinearUnit) is launched to compute the activation function in parallel on the GPU.
- The kernel launch is sized to the output tensor to ensure efficient GPU utilization.
- The alpha parameter, provided during construction, scales the output for negative input values.

Implements nz::nodes::Node.

Definition at line 461 of file Nodes.cu.
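The element-wise nature of the forward pass can be checked against a small host-side reference. The sketch below assumes the standard ELU definition and uses a flat `std::vector<float>` in place of a tensor; `elu_forward_reference` is a hypothetical helper, not the ExponentialLinearUnit kernel.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// CPU reference for an element-wise ELU forward pass (hypothetical helper).
// Each output element depends only on the input element at the same index.
std::vector<float> elu_forward_reference(const std::vector<float>& input,
                                         float alpha = 1.0f) {
    std::vector<float> output(input.size());
    std::transform(input.begin(), input.end(), output.begin(),
                   [alpha](float x) {
                       return x > 0.0f ? x : alpha * std::expm1(x);
                   });
    assert(output.size() == input.size());  // element count is preserved
    return output;
}
```

Since no element depends on its neighbours, the same computation maps naturally onto one GPU thread per element, which is the property a parallel kernel relies on.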